Machine Learning A-Z: Part 2 – Regression (Random Forest Regression)

Random Forest Intuition

Ensemble Learning

STEP 1: Pick at random K data points from the Training set.

STEP 2: Build the Decision Tree associated with these K data points.

STEP 3: Choose the number Ntree of trees you want to build and repeat STEPS 1 & 2.

STEP 4: For a new data point, have each of your Ntree trees predict the value of Y for that point, then assign the new data point the average of all the predicted Y values.
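The four steps above can be sketched in plain Python. This is only a minimal illustration under stated assumptions, not the course's implementation: the helper names (`build_tree`, `predict_tree`, `random_forest`, `predict_forest`) and the toy data are made up for the example, it handles a single feature only, and it assumes the K points in STEP 1 are drawn with replacement.

```python
import random
from statistics import mean


def sse(ys):
    """Sum of squared errors of ys around their mean."""
    m = mean(ys)
    return sum((y - m) ** 2 for y in ys)


def build_tree(points, min_size=1):
    """STEP 2: grow a one-feature regression tree on (x, y) pairs.

    A leaf is a number (the mean of its y values); an internal node is a
    tuple (threshold, left_subtree, right_subtree).
    """
    xs = sorted({x for x, _ in points})
    if len(points) <= min_size or len(xs) == 1:
        return mean([y for _, y in points])

    def cost(t):
        left = [y for x, y in points if x <= t]
        right = [y for x, y in points if x > t]
        return sse(left) + sse(right)

    # Candidate thresholds are midpoints between neighbouring x values;
    # keep the one that minimises the total squared error of both sides.
    t = min(((lo + hi) / 2 for lo, hi in zip(xs, xs[1:])), key=cost)
    left = [p for p in points if p[0] <= t]
    right = [p for p in points if p[0] > t]
    return (t, build_tree(left, min_size), build_tree(right, min_size))


def predict_tree(tree, x):
    """Walk down the tree until a leaf (a plain number) is reached."""
    while isinstance(tree, tuple):
        threshold, left, right = tree
        tree = left if x <= threshold else right
    return tree


def random_forest(points, n_trees, k, seed=0):
    """STEPS 1-3: build n_trees trees, each on k randomly drawn points.

    Assumption: points are drawn with replacement.
    """
    rng = random.Random(seed)
    return [build_tree(rng.choices(points, k=k)) for _ in range(n_trees)]


def predict_forest(trees, x):
    """STEP 4: average the predictions of all trees."""
    return mean([predict_tree(tree, x) for tree in trees])


# Toy data, made up for illustration: y = x^2 for x = 1..10.
points = [(x, x ** 2) for x in range(1, 11)]
forest = random_forest(points, n_trees=50, k=len(points))
prediction = predict_forest(forest, 6.5)
print(prediction)
```

Because every tree sees a different random sample, the individual predictions for 6.5 differ from tree to tree; the forest's answer is simply their mean, so it always lies within the range of Y values seen in training.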

For example, think of a guessing game with a jar of jellybeans: any single wild guess is likely to be far off, but the average of many wild guesses tends to land much closer to the true count.
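The jellybean intuition can be checked with a quick simulation. This sketch assumes a hypothetical jar of 500 jellybeans and guesses that are noisy but unbiased (uniformly spread around the true count); real guesses may be biased, in which case averaging helps less.

```python
import random
from statistics import mean

rng = random.Random(42)
true_count = 500  # hypothetical number of jellybeans in the jar

# Each guess is wild but unbiased: anywhere from 0.5x to 1.5x the true count.
guesses = [rng.uniform(0.5 * true_count, 1.5 * true_count) for _ in range(1000)]

# How far off a single guess is on average, versus the averaged guess.
typical_error = mean(abs(g - true_count) for g in guesses)
error_of_average = abs(mean(guesses) - true_count)

print(f"typical single-guess error:  {typical_error:.1f}")
print(f"error of the averaged guess: {error_of_average:.1f}")
```

In a random forest, each tree plays the role of one guesser, and the averaging step is what reduces the error of the combined prediction.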

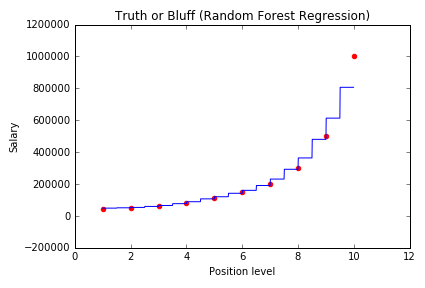

Random Forest Regression

Python


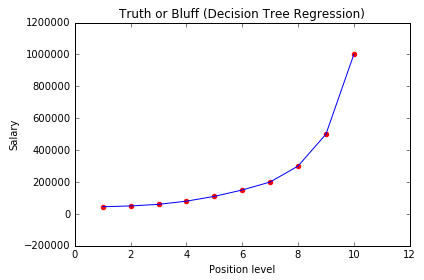

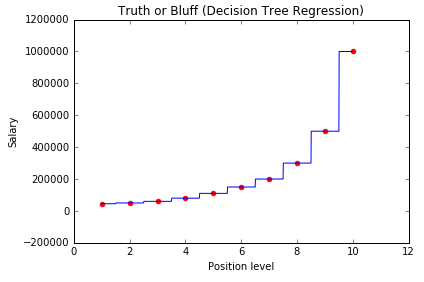

```python
# Random Forest Regression

# Importing the libraries
import numpy as np
import matplotlib.pyplot as plt
import pandas as pd

# Importing the dataset
dataset = pd.read_csv('Position_Salaries.csv')
X = dataset.iloc[:, 1:2].values  # 2D array of position levels
y = dataset.iloc[:, 2].values    # 1D array of salaries

# Splitting the dataset into the Training set and Test set
# (sklearn.cross_validation was removed in scikit-learn 0.20; use model_selection)
"""from sklearn.model_selection import train_test_split
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size = 0.2, random_state = 0)"""

# Feature Scaling
"""from sklearn.preprocessing import StandardScaler
sc_X = StandardScaler()
X_train = sc_X.fit_transform(X_train)
X_test = sc_X.transform(X_test)
sc_y = StandardScaler()
y_train = sc_y.fit_transform(y_train.reshape(-1, 1))"""

# Fitting the Random Forest Regression to the dataset
from sklearn.ensemble import RandomForestRegressor
regressor = RandomForestRegressor(n_estimators = 300, random_state = 0)
regressor.fit(X, y)

# Predicting a new result (predict expects a 2D array: one row per sample)
y_pred = regressor.predict([[6.5]])

# Visualising the Random Forest Regression results (for higher resolution and smoother curve)
X_grid = np.arange(X.min(), X.max(), 0.01)
X_grid = X_grid.reshape((len(X_grid), 1))
plt.scatter(X, y, color = 'red')
plt.plot(X_grid, regressor.predict(X_grid), color = 'blue')
plt.title('Truth or Bluff (Random Forest Regression)')
plt.xlabel('Position level')
plt.ylabel('Salary')
plt.show()
```

R


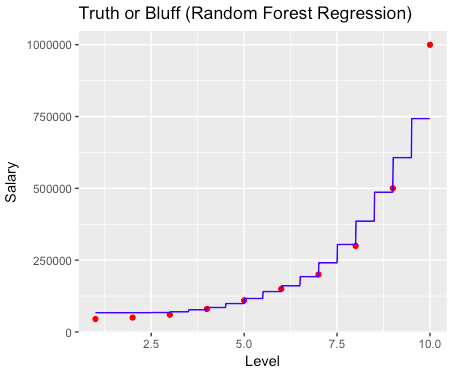

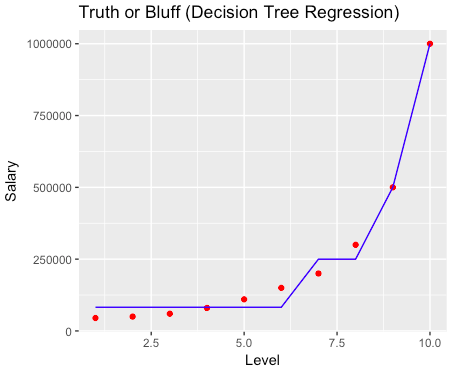

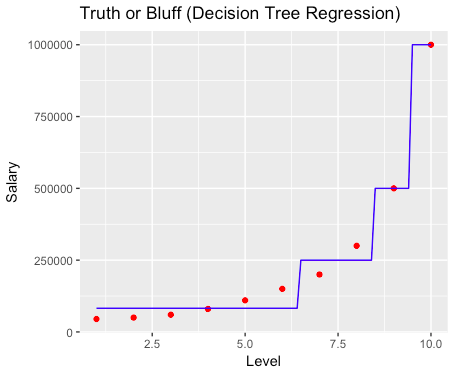

```r
# Random Forest Regression

# Importing the dataset
dataset = read.csv('Position_Salaries.csv')
dataset = dataset[2:3]

# Splitting the dataset into the Training set and Test set
# # install.packages('caTools')
# library(caTools)
# set.seed(123)
# split = sample.split(dataset$Salary, SplitRatio = 2/3)
# training_set = subset(dataset, split == TRUE)
# test_set = subset(dataset, split == FALSE)

# Feature Scaling
# training_set = scale(training_set)
# test_set = scale(test_set)

# Fitting the Random Forest Regression to the dataset
# install.packages('randomForest')
library(randomForest)
set.seed(1234)
# The argument name is lowercase `ntree`; R argument names are case-sensitive
regressor = randomForest(x = dataset[1],
                         y = dataset$Salary,
                         ntree = 500)

# Predicting a new result
y_pred = predict(regressor, data.frame(Level = 6.5))

# Visualising the Random Forest Regression results (for higher resolution and smoother curve)
# install.packages('ggplot2')
library(ggplot2)
x_grid = seq(min(dataset$Level), max(dataset$Level), 0.01)
ggplot() +
  geom_point(aes(x = dataset$Level, y = dataset$Salary),
             colour = 'red') +
  geom_line(aes(x = x_grid, y = predict(regressor, newdata = data.frame(Level = x_grid))),
            colour = 'blue') +
  ggtitle('Truth or Bluff (Random Forest Regression)') +
  xlab('Level') +
  ylab('Salary')
```